Mar 20, 2019 - The live stream, which was watched by some 200 users, was flagged only 12. Why was the live stream video of the New Zealand twin mosque attack aired for 17 minutes on Facebook?

CREDIT: Mark Baker/AP/REX/Shutterstock

Internet platforms played a central role in the mass shootings at New Zealand mosques Friday, which killed at least 49 people, and the attacks immediately prompted renewed calls for Facebook, Twitter and other platforms to take much stronger steps to combat the spread of violent hate speech.

One of the attackers live-streamed the attack on Facebook Live in a 17-minute video, which showed him entering the Al Noor Mosque in Christchurch, New Zealand, and shooting multiple people. Prior to the massacre, the individual allegedly had posted a 74-page anti-Muslim manifesto decrying “white genocide” on Twitter and discussion site 8chan, a notorious haven for hate speech. In a forum on 8chan, someone on Friday at 1:30 p.m. New Zealand time posted the message: “I will carry out and attack against the invaders, and will even livestream the attack via Facebook.” It’s not clear when Facebook removed the video or shut down the accounts in question.

The company provided a statement from Facebook spokeswoman Mia Garlick, who said in part: “Police alerted us to a video on Facebook shortly after the livestream commenced and we quickly removed both the shooter’s Facebook and Instagram accounts and the video. We’re also removing any praise or support for the crime and the shooter or shooters as soon as we’re aware.”

Twitter also disabled the profile of the alleged attacker, and said it was “working vigilantly to remove any footage” related to the Christchurch attacks. Still, some of the content posted by the alleged shooter continued to be available for hours afterward, as people cropped the video or posted the text of the manifesto as an image to avoid getting detected by the platforms’ automated systems, the New York Times reported.

The internet giants have repeatedly vowed to crack down on violent extremism and hate-related content.

In 2017, for example, the companies launched a cross-company effort to combat terrorism and extremist material.

“You really need to do more to stop violent extremism being promoted on your platforms. Take some ownership. Enough is enough,” Sajid Javid (@sajidjavid) wrote on Twitter.

Similar criticism was leveled by Damian Collins, a member of the British Parliament who has been highly critical of Facebook’s business practices of late.

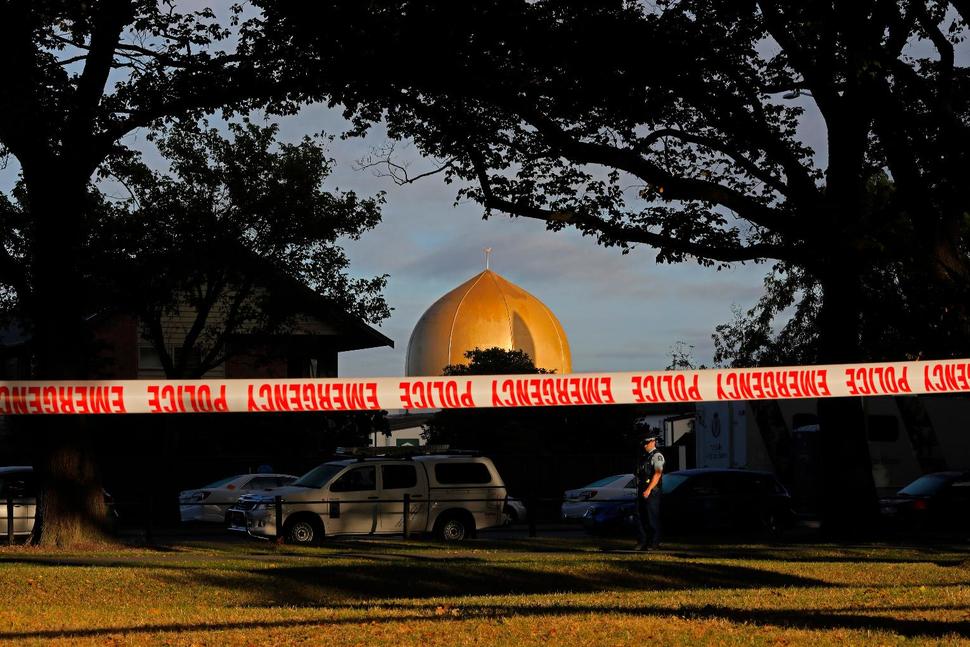

“It’s very distressing that the terrorist attack in New Zealand was live streamed on social media & footage was available hours later,” he said. “There must be a serious review of how these films were shared and why more effective action wasn’t taken to remove them.”

Stewart Clarke contributed to this report.

Pictured above: Scene outside a mosque in central Christchurch, New Zealand, after a mass shooting killed at least 49 people.